Foundations of Transformer Language Modeling


LLM

2025-11-21

0. Introduction: the mathematical definition of language modeling

Natural language can be viewed as a discrete sequence generated from a finite vocabulary V:

$$x_1, x_2, \dots, x_T, \qquad x_t \in V.$$

The goal of a language model is to define the probability distribution

$$p(x_1, \dots, x_T) = \prod_{t=1}^{T} p(x_t \mid x_{<t}),$$

where $x_{<t} = (x_1, \dots, x_{t-1})$.
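As a rough illustration of this factorization, the sketch below multiplies next-token conditionals to get the probability of a whole sequence. The bigram table `BIGRAM`, the start marker `<s>`, and the helper names are hypothetical; a real model would condition on the full prefix rather than only the previous token.

```python
import math

# Hypothetical toy model: the conditional depends only on the previous token.
# "<s>" is an assumed start-of-sequence marker.
BIGRAM = {
    "<s>": {"the": 0.5, "cat": 0.25, "sat": 0.25},
    "the": {"the": 0.25, "cat": 0.5, "sat": 0.25},
    "cat": {"the": 0.25, "cat": 0.25, "sat": 0.5},
    "sat": {"the": 0.5, "cat": 0.25, "sat": 0.25},
}

def next_token_prob(token, prefix):
    """Return p(x_t | x_<t) under the toy bigram table."""
    prev = prefix[-1] if prefix else "<s>"
    return BIGRAM[prev][token]

def sequence_log_prob(tokens):
    """Sum the log-conditionals, i.e. the log of the product over t."""
    return sum(
        math.log(next_token_prob(tok, tokens[:t]))
        for t, tok in enumerate(tokens)
    )

# p(the) * p(cat | the) * p(sat | the, cat) = 0.5 * 0.5 * 0.5
prob = math.exp(sequence_log_prob(["the", "cat", "sat"]))
print(prob)  # approximately 0.125
```

Summing log-probabilities instead of multiplying probabilities is the usual way to avoid underflow on long sequences.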

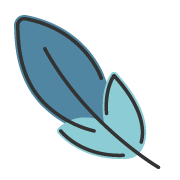

1. The decoder-only Transformer

Modern language models such as GPT and LLaMA typically use the decoder-only Transformer, because it naturally matches autoregressive prediction.

1.1 Input representation: token embedding + position embedding

Each token id is first mapped into a vector:

$$e_t = E[x_t] \in \mathbb{R}^{d},$$

where $E \in \mathbb{R}^{|V| \times d}$ is the embedding matrix.

Because attention by itself is insensitive to token order and so does not encode position, we add a positional vector $p_t$:

$$h_t^{(0)} = e_t + p_t.$$
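The lookup-and-add step can be sketched in a few lines of plain Python. The table sizes, the numeric values, and the use of a learned position table `P` are assumptions for illustration (the text does not say which positional scheme is used), and positions are 0-indexed here.

```python
# Toy sizes (assumptions): vocabulary of 4 tokens, model width d = 3,
# and a position table covering up to 5 positions.
E = [
    [0.1, 0.2, 0.3],
    [0.4, 0.5, 0.6],
    [0.7, 0.8, 0.9],
    [1.0, 1.1, 1.2],
]  # shape |V| x d
P = [[0.01 * t] * 3 for t in range(5)]  # shape T_max x d

def embed(token_ids):
    """Return h^(0): token embedding plus position vector, per position."""
    return [
        [e + p for e, p in zip(E[x_t], P[t])]
        for t, x_t in enumerate(token_ids)
    ]

h0 = embed([2, 0, 2])
# Token 2 appears at positions 0 and 2 and gets two different input vectors.
print(h0[0], h0[2])
```

Without the position term, the two occurrences of token 2 would be indistinguishable to the attention layers.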

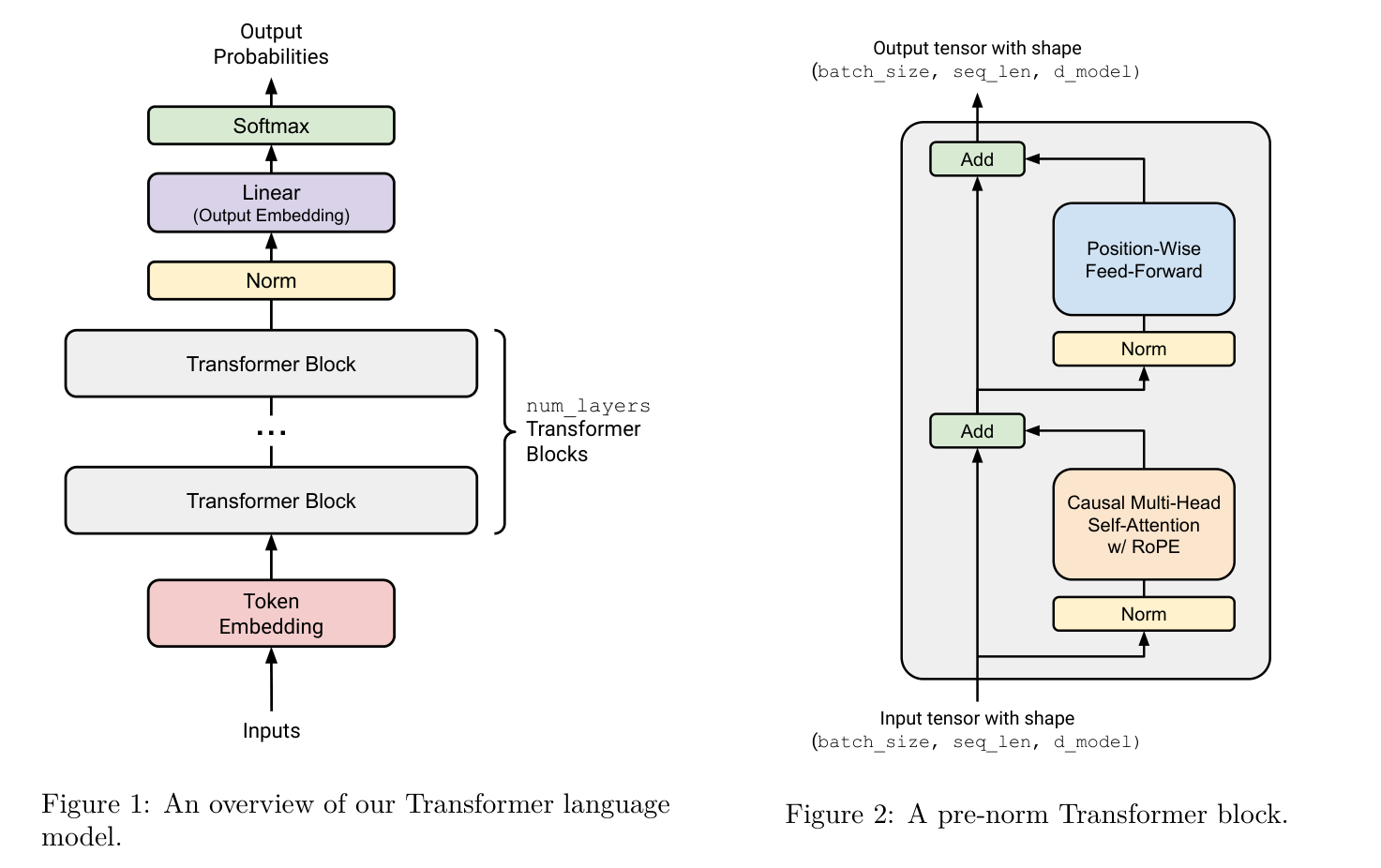

2. The Transformer block

Each Transformer layer contains two main parts:

- masked self-attention

- feed-forward network

Each part is wrapped in a residual connection and paired with layer normalization.
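One common way to wire these pieces together is sketched below. The pre-norm ordering (normalize, apply the sub-layer, add the result back) is an assumption, since the text does not say where the normalization sits, and `layer_norm` here omits the learned gain and bias. The `attention` and `ffn` arguments are placeholders for the two sub-layers.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and unit variance (no learned gain/bias)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def transformer_block(H, attention, ffn):
    """Pre-norm wiring (an assumption): h + sublayer(layer_norm(h)).

    `attention` maps a list of vectors to a list of vectors (it mixes positions);
    `ffn` maps a single vector to a single vector (it is position-wise).
    """
    normed = [layer_norm(h) for h in H]
    H = [[a + b for a, b in zip(h, upd)] for h, upd in zip(H, attention(normed))]
    H = [[a + b for a, b in zip(h, ffn(layer_norm(h)))] for h in H]
    return H

# Placeholder sub-layers that contribute nothing: the residual path alone
# carries the input through unchanged.
zero_attention = lambda X: [[0.0] * len(x) for x in X]
zero_ffn = lambda x: [0.0] * len(x)
H_in = [[1.0, 2.0], [3.0, 4.0]]
H_out = transformer_block(H_in, zero_attention, zero_ffn)
```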

2.1 Linear projections to Q, K, and V

Let the input to a layer be

$$H = (h_1, \dots, h_T) \in \mathbb{R}^{T \times d}.$$

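We compute

$$Q = H W_Q, \qquad K = H W_K, \qquad V = H W_V,$$

where $W_Q, W_K \in \mathbb{R}^{d \times d_k}$ and $W_V \in \mathbb{R}^{d \times d_v}$ are learned projection matrices.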

Intuitively:

- $Q$ (query) encodes what information a token is looking for;

- $K$ (key) describes what kind of information each token offers;

- $V$ (value) carries the actual content that will be aggregated.
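The three projections are ordinary matrix products applied to the same input. The sketch below uses plain Python lists to stay dependency-free; the `matmul` helper, the toy sizes (3 tokens, width 2), and the weight values are all made up for illustration.

```python
def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

# Toy input: T = 3 tokens, d = 2. Projection sizes d_k = d_v = 2 are assumed.
H = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
]
W_Q = [[1.0, 0.0], [0.0, 1.0]]  # identity: queries equal the inputs
W_K = [[0.0, 1.0], [1.0, 0.0]]  # swaps the two coordinates
W_V = [[2.0, 0.0], [0.0, 2.0]]  # doubles the inputs

Q, K, V = matmul(H, W_Q), matmul(H, W_K), matmul(H, W_V)
print(Q, K, V, sep="\n")
```

Each row of `Q`, `K`, and `V` belongs to one token, so all three matrices have `T` rows.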

2.2 Scaled dot-product attention

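The raw (pre-softmax) similarity matrix is

$$S = \frac{Q K^\top}{\sqrt{d_k}},$$

where $d_k$ is the dimension of each query and key vector; dividing by $\sqrt{d_k}$ keeps the scores from growing with that dimension.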
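A small numeric sketch of the score computation, with made-up query and key rows of dimension 4 so that the scaling factor is exactly 2:

```python
import math

def scaled_scores(Q, K):
    """S[i][j] = (q_i . k_j) / sqrt(d_k), for Q and K stored as lists of rows."""
    d_k = len(K[0])
    return [
        [sum(q * k for q, k in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in K]
        for q_i in Q
    ]

# Illustrative values only: two queries and two keys with d_k = 4.
Q = [[2.0, 0.0, 0.0, 0.0], [0.0, 2.0, 0.0, 0.0]]
K = [[2.0, 0.0, 0.0, 0.0], [2.0, 2.0, 0.0, 0.0]]
S = scaled_scores(Q, K)
print(S)  # [[2.0, 2.0], [0.0, 2.0]]
```

Entry `S[i][j]` is large when query `i` and key `j` point in similar directions, and zero when they are orthogonal.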

2.3 Causal masking

For language modeling, the token at position $i$ must not attend to any later position $j > i$. So we apply a causal mask:

$$M_{ij} = \begin{cases} 0, & j \le i, \\ -\infty, & j > i. \end{cases}$$
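The mask is just a lower-triangular pattern of zeros with negative infinity above the diagonal. A minimal sketch, using 0-indexed positions and a hypothetical helper name `causal_mask`:

```python
def causal_mask(T):
    """M[i][j] = 0 if j <= i (past or present), else -inf (future)."""
    neg_inf = float("-inf")
    return [[0.0 if j <= i else neg_inf for j in range(T)] for i in range(T)]

M = causal_mask(3)
for row in M:
    print(row)
# [0.0, -inf, -inf]
# [0.0, 0.0, -inf]
# [0.0, 0.0, 0.0]
```

Every row keeps at least one finite entry (the diagonal), so a softmax over the masked row is always well defined.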

2.4 Softmax attention

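The normalized attention matrix is

$$A = \operatorname{softmax}(S + M),$$

where the softmax is taken over each row, so every row of $A$ sums to 1 and the entries with $M_{ij} = -\infty$ receive weight exactly 0.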
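To see how the mask interacts with the softmax, the sketch below applies a row-wise softmax to a masked score matrix. The all-zero scores are chosen only to make the result easy to read; subtracting the row maximum before exponentiating is a standard stability trick, not something the text prescribes.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row; exp(-inf) evaluates to 0.0."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def masked_attention_weights(S, M):
    """Row-wise softmax of S + M."""
    return [
        softmax([s + m for s, m in zip(s_row, m_row)])
        for s_row, m_row in zip(S, M)
    ]

NEG_INF = float("-inf")
S = [[0.0, 0.0, 0.0] for _ in range(3)]  # equal scores everywhere
M = [
    [0.0, NEG_INF, NEG_INF],
    [0.0, 0.0, NEG_INF],
    [0.0, 0.0, 0.0],
]
A = masked_attention_weights(S, M)
for row in A:
    print(row)
```

With equal scores, position 0 puts all its weight on itself, position 1 splits weight evenly over positions 0 and 1, and position 2 spreads it over all three.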

2.5 Information aggregation

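The output at position $i$ is

$$\mathrm{output}_i = \sum_{j=1}^{T} a_{i,j} V_j,$$

where $a_{i,j}$ is entry $(i, j)$ of $A$ and $V_j$ is the $j$-th row of $V$. Because of the causal mask, only terms with $j \le i$ contribute.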
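The aggregation is a weighted average of value rows. In the sketch below the attention weights and values are made-up numbers, with the weights already causal (zero above the diagonal):

```python
def aggregate(A, V):
    """output_i = sum_j A[i][j] * V[j], a weighted average of the value rows."""
    d_v = len(V[0])
    return [
        [sum(a_ij * v_j[c] for a_ij, v_j in zip(a_i, V)) for c in range(d_v)]
        for a_i in A
    ]

# Illustrative causal attention weights (each row sums to 1) and value rows.
A = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.25, 0.25, 0.5],
]
V = [
    [2.0, 0.0],
    [0.0, 4.0],
    [8.0, 8.0],
]
out = aggregate(A, V)
print(out)  # [[2.0, 0.0], [1.0, 2.0], [4.5, 5.0]]
```

The first output equals the first value row, because position 0 can only attend to itself.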

3. Multi-head attention and FFN

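Instead of using a single attention mechanism, the model splits the representation into several heads, each running the attention above on its own lower-dimensional slice. Each head can focus on a different type of dependency, and the head outputs are concatenated and passed through an output projection.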
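A minimal sketch of the split-attend-concatenate pattern follows. It assumes the heads are equal-width slices of already projected `Q`, `K`, and `V`, and it leaves out the final output projection to stay short; the function names are hypothetical.

```python
import math

def attention(Q, K, V):
    """Causal scaled dot-product attention for a single head."""
    d_k = len(K[0])
    out = []
    for i, q in enumerate(Q):
        # Position i may only attend to positions 0..i.
        scores = [
            sum(a * b for a, b in zip(q, K[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        m = max(scores)
        w = [math.exp(s - m) for s in scores]
        total = sum(w)
        out.append([
            sum(w[j] / total * V[j][c] for j in range(i + 1))
            for c in range(len(V[0]))
        ])
    return out

def split_heads(X, n_heads):
    """Slice each row of X into n_heads equal chunks; one matrix per head."""
    d_head = len(X[0]) // n_heads
    return [[row[h * d_head:(h + 1) * d_head] for row in X] for h in range(n_heads)]

def multi_head_attention(Q, K, V, n_heads):
    heads = [
        attention(q, k, v)
        for q, k, v in zip(
            split_heads(Q, n_heads), split_heads(K, n_heads), split_heads(V, n_heads)
        )
    ]
    # Concatenate the head outputs back to the full width for every position.
    return [[x for head in heads for x in head[i]] for i in range(len(Q))]

X = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0]]
out = multi_head_attention(X, X, X, n_heads=2)
```

Each head sees only its own slice, so two heads can assign different attention weights to the same pair of positions.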

After attention, each token representation passes through a position-wise feed-forward network:

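$$\mathrm{FFN}(x) = W_2\, \sigma(W_1 x + b_1) + b_2,$$

where $\sigma$ is an element-wise nonlinearity such as ReLU or GELU.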
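The feed-forward step can be sketched for a single token vector as follows. ReLU is used for the nonlinearity as an assumption, and the weights, biases, and hidden width of 3 are made-up values.

```python
def relu(v):
    """Element-wise max(0, x)."""
    return [max(0.0, a) for a in v]

def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * a for w, a in zip(row, x)) for row in W]

def ffn(x, W1, b1, W2, b2):
    """FFN(x) = W2 * relu(W1 * x + b1) + b2, applied to one token vector."""
    hidden = relu([z + b for z, b in zip(matvec(W1, x), b1)])
    return [z + b for z, b in zip(matvec(W2, hidden), b2)]

# Illustrative parameters: d = 2, hidden width 3.
W1 = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]  # hidden x d
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]      # d x hidden
b2 = [0.5, 0.5]

y = ffn([1.0, -2.0], W1, b1, W2, b2)
print(y)  # [1.5, 1.5]
```

The same function is applied independently to every position, which is what "position-wise" means here.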

4. Final remarks

If I had to summarize the whole picture in one sentence, it would be this:

A decoder-only Transformer is a machine that repeatedly decides what each token should attend to, then updates the representation accordingly, while never looking into the future.